Whose Doctor Does the Algorithm See?

Four new studies on the ethics, bias and equity gaps shaping AI in medical education.

There is a particular quality to early May in a Scottish university, when the corridors empty out as students hunker down for exams and the rest of us begin the long slog of marking. This year I have managed to squeeze in some travel to discuss the ethics and safety of AI use with colleagues in Cardiff and Swansea.

These conversations centred on who AI serves, who it leaves out, and whether the rest of us, the educators, are even ready to have the discussion. The four papers below sit precisely at that intersection. None offers a tidy answer, which is rather the point.

A new framework for AI ethics, built from the ground up

Zainal and colleagues conducted semi-structured interviews with 30 early-career doctors, between one and five years post-graduation, drawn from nine public healthcare institutions in Singapore between April and June 2025 [1]. Purposive sampling across specialties, gender and ethnicity gave a reasonably diverse sample, and analysis followed Braun and Clarke’s reflexive thematic approach with the four classical bioethical principles used as sensitising concepts rather than a fixed coding frame. Sixteen of the participants had worked directly on AI projects in ophthalmology, orthopaedics, radiology and general surgery, while the rest used tools like ChatGPT and Russell-GPT informally. None had received structured AI ethics teaching at medical school. Across the interviews, seven recurring practical challenges emerged: system opacity, dataset bias and generalisability, data privacy and consent in networked environments, insufficient patient-specific contextualisation of outputs, hallucination risk, ambiguous accountability when something goes wrong, and a slow drift towards cognitive offloading.

From this analysis the authors propose the Digital-Age Clinical AI Ethics Competence (DCEC) framework, comprising four domains (epistemic awareness, relational integrity, reflexive accountability and adaptive professionalism) anchored by ethical digital literacy. Each domain is operationalised with concrete suggestions for OSCE stations, reflective portfolios and ethics vivas. The framework’s strength is that it reframes classical principlism through the realities clinicians actually encounter, rather than treating AI as a special case of confidentiality or beneficence. Its limitation, at this stage, is that it remains a conceptual proposal derived from a single jurisdiction’s qualitative data, and whether the four domains translate into measurable competencies in undergraduate or postgraduate curricula is the obvious next question. For educators outside medicine, the takeaway may simply be that domain-specific ethics teaching needs to be updated alongside the tools, not several years after them.

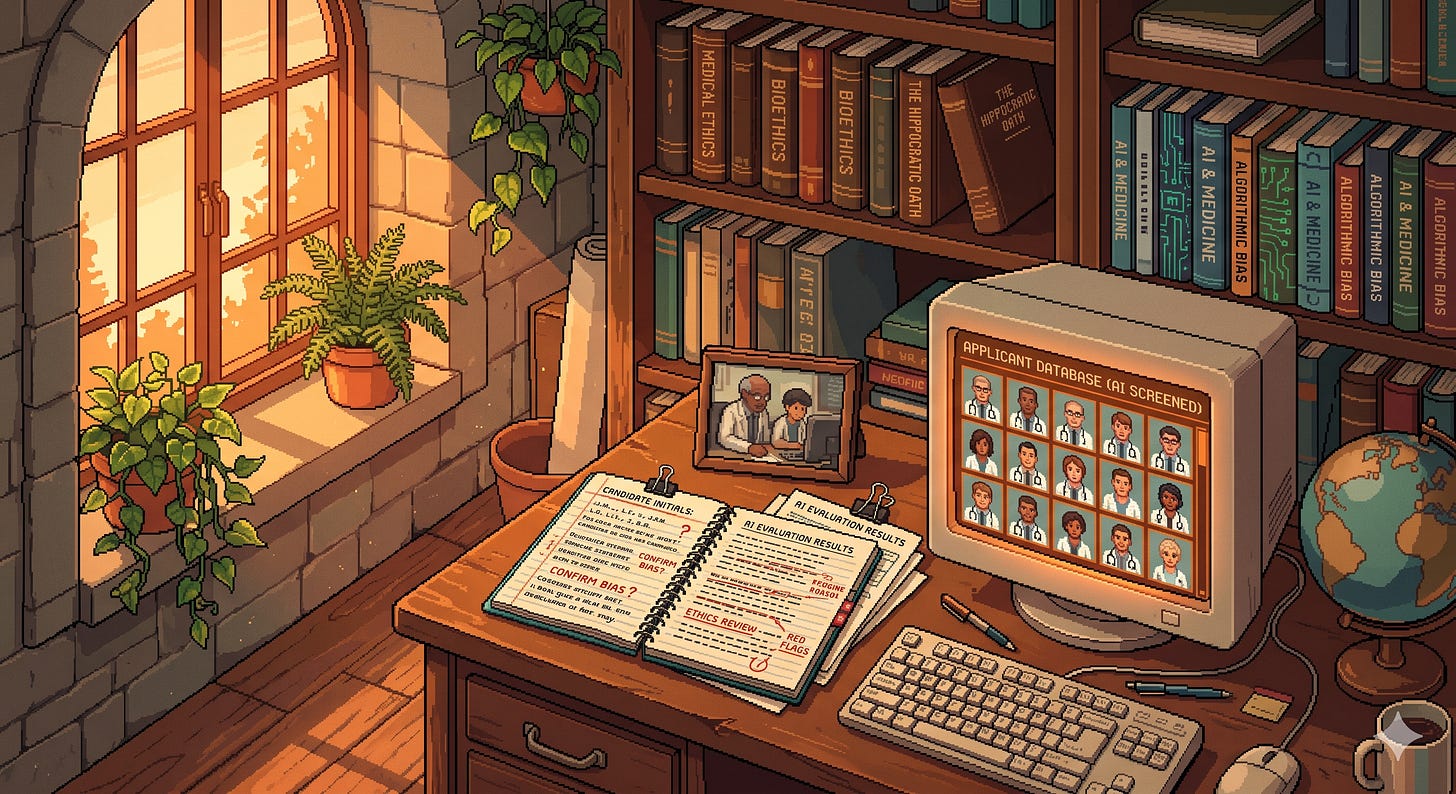

What does an AI think a doctor looks like?

Hartley and Fisher took a simple but pointed approach [2]. They used two free-to-use AI text-to-image generators (Microsoft Designer running OpenAI’s DALL-E 3, and Deep AI’s internally developed model) to produce 1200 images across 30 medical specialties, then compared the apparent gender distribution of the generated doctors with NHS England specialty trainee recruitment data from 2021 to 2023. The two authors independently classified each image as male, female or not classifiable, with disagreements resolved by discussion. Specialties accounting for less than 0.25% of recruitment were excluded, and 25 unclassifiable images (2%) were removed. Across the dataset, 82% of AI-generated doctors were male, against 47% in actual NHS trainee data, a difference significant at p<0.0001. For 28 of the 30 specialties, the generators under-represented female doctors. Strikingly, neither tool produced any images of female general practitioners, trauma and orthopaedic surgeons or urologists, while women were over-represented in dermatology, obstetrics and gynaecology, and plastic surgery. The authors also noted a subtler “presentational” bias, with female doctors in dermatology and plastic surgery shown with enhanced facial features, makeup and jewellery in ways that male counterparts were not.
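
For readers who want a feel for why a gap of that size produces such a small p-value, here is a minimal sketch of a one-sample z-test for a proportion. It is my own illustration and not necessarily the test the authors ran. It assumes the 82% figure applies to the 1175 classifiable images (1200 minus the 25 removed) and treats the 47% NHS figure as a fixed reference value; the function name `proportion_z_test` is hypothetical.

```python
import math


def proportion_z_test(p_hat: float, n: int, p0: float) -> tuple[float, float]:
    """One-sample z-test for a proportion.

    Returns (z, two-sided p-value) for the null hypothesis that the
    true proportion equals p0, using the normal approximation.
    """
    standard_error = math.sqrt(p0 * (1 - p0) / n)
    z = (p_hat - p0) / standard_error
    # Two-sided tail probability of a standard normal: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Assumption: 82% male among the classifiable images (1200 generated, 25 removed).
n_classifiable = 1200 - 25
z_stat, p_value = proportion_z_test(p_hat=0.82, n=n_classifiable, p0=0.47)

print(f"z = {z_stat:.1f}, p < 0.0001: {p_value < 0.0001}")
```

With a sample of this size, a 35-percentage-point gap sits more than twenty standard errors from the reference value, which is why the reported threshold of p<0.0001 is comfortably met.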

The methodology has limits worth naming. Classifying gender from images with two raters is inherently reductive; the choice of free-to-use tools means newer or paid models may behave differently; the comparison sets the gender of those entering training against the gender of doctors as the model chooses to depict them, which are not the same thing; and the authors note that algorithm updates mean a repeat study could produce different results. Even so, the magnitude of the disparity is hard to dismiss. The authors argue that visual culture in medicine matters because it signals who belongs where, and that educators using AI imagery in slides, posters or prospectuses risk amplifying stereotypes they would otherwise have spent years working to undo. The implication is uncomfortable but practical. Any educator reaching for an AI image generator to illustrate a profession should look critically at what comes back before pasting it into the next presentation.

When the marker has a linguistic preference

Yvan, Charles and Lucy’s systematic review brings together 27 studies published between 2022 and 2025 on how generative AI tools affect assessment fairness for non-native English-speaking (NNES) medical students [3]. The authors followed PRISMA 2020 procedures, searched ERIC, PubMed, Scopus and Web of Science, used Kane’s validity framework as their analytic lens, and assessed methodological quality with the MMAT and AXIS tools. The 27 included studies broke down into 14 quantitative, 8 mixed methods and 5 qualitative pieces. Three findings stand out. First, in six experimental studies AI detectors misclassified NNES writing as AI-generated in a median of around 56% of cases, compared with roughly 3% for native English speakers, putting NNES students at approximately 17 times the risk of a false misconduct flag on a single submission. Second, four automated scoring studies showed a systematic downward bias of between 0.5 and 1.2 standard deviations against NNES writers, the equivalent of roughly half to a full letter grade. Third, across eight student surveys totalling 2,847 medical students, 79% of NNES respondents reported considerable apprehension about AI in assessment, and 71% perceived their institution’s AI policies as ambiguous.
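
The "17 times" figure is a risk ratio, and it is worth seeing how sensitive it is to rounding. The sketch below is my own arithmetic on the rounded percentages quoted above, not the review's calculation; the function name `risk_ratio` is hypothetical.

```python
def risk_ratio(rate_group: float, rate_reference: float) -> float:
    """Ratio of two event rates, e.g. false-flag rates for two groups."""
    if rate_reference <= 0:
        raise ValueError("reference rate must be positive")
    return rate_group / rate_reference


# Rounded figures as quoted in the text above.
nnes_false_flag_rate = 0.56    # "around 56%"
native_false_flag_rate = 0.03  # "roughly 3%"

ratio_from_rounded = risk_ratio(nnes_false_flag_rate, native_false_flag_rate)

# Working backwards: the native-speaker rate that a 17-fold ratio would imply.
implied_native_rate = nnes_false_flag_rate / 17

print(f"ratio from rounded figures: {ratio_from_rounded:.1f}")
print(f"native rate implied by a 17x ratio: {implied_native_rate:.1%}")
```

The rounded inputs give a ratio nearer 19 than 17, and a 17-fold ratio would correspond to a native-speaker rate of a little over 3%. That is consistent with the review's "approximately 17 times" having been computed from unrounded values, although I am inferring that rather than reporting it.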

The authors interpret these findings through the lens of construct-irrelevant variance. If the construct of interest is clinical reasoning rather than linguistic fluency, then any score variance attributable to writing style instead of reasoning quality is a threat to validity. They argue that detection tools which treat low perplexity (that is, highly predictable text) as a sign of machine authorship will systematically penalise the simpler syntax typical of NNES writing, even when the underlying reasoning is sound. The review has real limitations, including the fact that 78% of the included studies came from English-dominant countries, only a minority used experimental designs, and longitudinal data on actual progression outcomes are absent. The recommendations are nonetheless practical, including two-domain rubrics that separate clinical reasoning from linguistic polish, transparent institutional policies with clear appeals processes, and bias audits before any AI tool enters high-stakes assessment. For educators outside medicine the lesson generalises: any AI-mediated marking that has not been validated across linguistic subgroups is likely to encode existing inequities at scale, and to do so invisibly.
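
A bias audit of the kind recommended here can start very simply: take submissions known to be human-written, run them through the detector, and compare the false-positive rate across linguistic subgroups. The sketch below is a minimal illustration under my own assumptions. The `Submission` record, the group labels and the sample data are all hypothetical and do not come from the review.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Submission:
    group: str           # hypothetical subgroup label, e.g. "NNES" or "native"
    human_written: bool  # ground truth established independently of the detector
    flagged_as_ai: bool  # what the detector reported


def false_positive_rates(submissions: list[Submission]) -> dict[str, float]:
    """False-positive rate per group, computed over human-written work only."""
    flagged: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for s in submissions:
        if not s.human_written:
            continue  # AI-written items cannot be false positives
        total[s.group] += 1
        flagged[s.group] += int(s.flagged_as_ai)
    return {group: flagged[group] / total[group] for group in total}


# Entirely made-up audit sample, for illustration only.
sample = (
    [Submission("NNES", True, True)] * 5
    + [Submission("NNES", True, False)] * 5
    + [Submission("native", True, True)] * 1
    + [Submission("native", True, False)] * 9
    + [Submission("native", False, True)] * 3  # ignored: not human-written
)

rates = false_positive_rates(sample)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} of human-written submissions falsely flagged")
```

A real audit would need far larger samples, confidence intervals and a defensible way of establishing ground truth, but even a table this crude makes a subgroup disparity visible before the tool touches a high-stakes assessment.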

Mind the generational gap

Nguyen and Tran’s piece is an opinion paper rather than empirical work, but it crystallises a problem many of us recognise [4]. They name the “AI adaptation divide” as the gap in AI readiness between digital-native students, who often arrive with established AI habits, and senior faculty who, frequently through no fault of their own, have had less time and infrastructure to engage with the same tools. The divide, they argue, is not only technical. It spans culture, mindset, trust, regulation and the way ethical judgment is exercised in everyday teaching. They draw particular attention to low- and middle-income country settings, where students often use AI through informal channels while faculty face structural barriers to training and policy support, widening the divide and increasing the likelihood of unsupervised, ethically inconsistent use.

The authors propose three complementary responses: parallel AI literacy tracks tailored to faculty and students respectively; intergenerational mentoring that flows in both directions, with students contributing tool fluency and senior educators contributing clinical judgment and ethical framing; and institutional incentives that support governance, ethics review and policy alignment. As an opinion piece, the paper offers no new evidence and the individual proposals are not novel, but the framing of reciprocal mentoring is genuinely useful because it sidesteps the deficit narrative that so often surrounds faculty AI training. For educators in any discipline, the underlying point is that hierarchical norms in higher education can quietly suppress the bidirectional learning that integrating any new technology, AI included, actually requires.

What links these four papers is a recognition that AI in education is not a neutral overlay on existing practice. Zainal and colleagues show that its use in clinical settings generates ethical pressures that traditional ethics teaching does not adequately cover. Hartley and Fisher demonstrate that the images we use to depict our profession can quietly reproduce the very stereotypes we have spent years trying to dismantle. Yvan and colleagues show that the same tools we are starting to use to mark student work can systematically disadvantage anyone whose first language is not English, often invisibly. Nguyen and Tran remind us that the people doing the integrating, students and faculty alike, are not a homogeneous group.

Four practical things follow. First, audit your own AI-generated outputs for bias before putting them in front of students; the simpler the prompt, the more likely the stereotype. Second, if AI is anywhere near your marking workflow, separate linguistic style from content in your rubric, and ask what subgroup validation has actually been done before the tool sees a real submission. Third, treat AI ethics as part of the curriculum’s living architecture rather than a one-off lecture, because the questions are evolving and so should the teaching. Fourth, find a junior colleague or student and ask them to show you what they actually do with AI in a typical week. The gap, when you see it, is usually instructive.

1. Zainal H, Chuan VT, Xiaohui X, Thumboo J, Yong FK. From principle to practice: Developing a digital-age clinical artificial intelligence ethics competence framework through early-career doctors’ experiences. Medical Education. 2026. doi:10.1111/medu.70195

2. Hartley A, Fisher J. Seeing is believing? Exploring gender bias in artificial intelligence imagery of specialty doctors. The Clinical Teacher. 2026;23(1):e70297. doi:10.1111/tct.70297

3. Yvan NJC, Charles K, Lucy AC. The impact of generative artificial intelligence tools on assessment equity for non-native English-speaking medical students: a systematic review. BMC Medical Education. 2026. doi:10.1186/s12909-026-09303-7

4. Nguyen NP, Tran P. The AI adaptation divide in medical education: A generational perspective. PLOS Digital Health. 2026;5(4):e0001338. doi:10.1371/journal.pdig.0001338